Ghost Dependencies: An Emerging Supply Chain Security Threat in the Agentic Coding Paradigm

Author: Tianchu Chen of Tencent Xuanwu Lab

0x00 Introduction

As the capabilities of Large Language Models (LLMs) continue to advance, AI-assisted software development is evolving from the “Copilot” paradigm—where humans write code and AI provides completions—to the “Agentic Coding” paradigm, where AI autonomously makes decisions and executes actions. In this new paradigm, AI is no longer merely a code generation assistant but has transformed into an intelligent agent capable of independently planning tasks, selecting technology stacks, manipulating file systems, and even executing commands.

However, this transfer of control introduces new attack surfaces: AI Agents make decisions on behalf of users, but these decisions are not always secure. Through extensive testing and analysis of mainstream Agentic Coding tools and their underlying LLMs, we have identified several prevalent AI decision-making risks. Among these, a category of risks related to the software supply chain can produce persistent and covert impacts. We have termed this phenomenon “Ghost Dependencies.”

Through further analysis and experimentation, we have confirmed that the “Ghost Dependencies” risk is pervasive in real-world Agentic Coding workflows. This risk not only leads to instability in AI-generated code quality but also introduces tangible security threats: attackers can analyze the “Ghost Dependencies” behavioral patterns of specific models in specific scenarios, then leverage known N-day vulnerabilities or supply chain poisoning techniques to inject malicious code into AI-generated projects.

To address these risks, we propose a Pre-Execution Hooks-based defense architecture for AI supply chain decision risks and have developed a plugin-based solution: Atuin. This protection solution is now available on the official Tencent Cloud CodeBuddy Code plugin marketplace.

0x01 Technical Background

In traditional software development workflows, human developers are the primary agents who generate and execute decisions, making security responsibility boundaries relatively clear. However, in Agentic Coding scenarios, IDE and CLI environments provide AI Agents with the capability to autonomously generate and execute decisions. A typical workflow proceeds as follows:

- Intent Understanding: The AI processes vague user instructions, such as: “Build a Django website.”

- Decision Generation: During its reasoning process, the AI autonomously decides which third-party libraries to introduce and which versions to select.

- Decision Execution: The AI automatically generates dependency manifest files (requirements.txt, package.json) and executes commands like pip install or npm install to complete the download and installation process.

In such workflows, the AI Agent is the true entity generating and executing decisions, with users no longer participating in the process. This transfer of control brings about a change in the threat model: attackers attempting supply chain attacks no longer need to deceive human developers. Instead, they can exploit inherent deficiencies in LLMs to inject malicious code while users trust the AI Agent and allow it to execute supply chain decisions autonomously.

0x02 Threat Model

In Agentic Coding scenarios, AI models must complete supply chain decision-making tasks autonomously. While testing the supply chain decision processes of mainstream Agentic Coding tools and industry-leading LLMs, we discovered a prevalent category of supply chain security risks. These risks arise from the probabilistic nature of LLM prediction, and we have named them “Ghost Dependencies”:

- R1 - “Ghost Versions”: LLMs tend to introduce outdated components that appear frequently in their training data, rather than the latest versions.

- R2 - “Ghost Package Names”: We further discovered that LLMs have a probability of “fabricating” non-existent package names. This “hallucination” behavior is model-specific and predictable.

Based on these security risks, we define the following two supply chain threats targeting the Agentic Coding development paradigm:

- T1 - N-day Vulnerability Exploitation via Outdated Component Versions: Attackers exploit the LLM’s behavioral pattern of introducing outdated component versions, scanning AI-generated projects for N-day vulnerabilities and exploiting them.

- T2 - Targeted Poisoning of Hallucinated Package Names: Attackers leverage the predictability of LLMs’ “package name hallucinations,” predicting and preemptively registering package names that a specific LLM is likely to “fabricate.” When the Agent produces the same hallucination, it automatically downloads the malicious package.

It should be noted that targeted poisoning of package names is not an entirely new threat unique to Agentic Coding. Before the concept of “AI coding” emerged, there were numerous supply chain poisoning attack cases where attackers attempted to exploit human developers’ typos by registering confusingly similar names in public package repositories and planting malicious code. Although “package name poisoning” in public repositories is a long-standing security threat, the proliferation of the Agentic Coding paradigm has significantly concentrated and amplified its severity:

- LLM “hallucinated package names” are more predictable: Compared to random typos by human developers, specific LLMs tend to produce patterned, predictable “hallucinated package names.” This heightened predictability drastically reduces the cost of poisoning attacks.

- AI Agent download and installation actions are executed automatically without human intervention: In traditional human-led development workflows, developers encountering unfamiliar components can verify package name accuracy through official websites or repositories. In contrast, in AI-led Agentic Coding workflows, decisions are autonomously generated by LLMs and typically executed automatically without human intervention. This shift in control makes “hallucinated package name” poisoning attacks against AI Agents more difficult for humans to detect and prevent in advance.

0x03 Experiments and Analysis

We selected a model (hereinafter referred to as “Model A”) as our test subject. This model approaches frontier-level performance in benchmarks for mathematics, coding, and tool calling, ranks among the top five in API call volume on OpenRouter, and is one of the mainstream coding models currently in use.

Across repeated invocations and tests of Model A, we observed pervasive “Ghost Dependencies” risks:

- “Ghost Versions” risk present in nearly all Python development scenarios: We found that, constrained by training dataset distribution, in Python web development scenarios, the requirements.txt output by Model A almost always contains outdated component versions released in 2023 or even earlier.

- “Ghost Package Names” risk with trigger probability up to 40% under specific complex requirements: We further presented Model A with more complex requests involving specific long-tail requirements, such as: requesting the model to introduce components that could enhance the visual effects of a web project. In our test scenarios, Model A’s hallucination rate for fabricating package names reached as high as 40%, with these “package name hallucinations” concentrated in several specific patterns that are easily predictable by attackers.
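A hallucination rate of this kind can be approximated offline. The following is an illustrative sketch under stated assumptions, not the exact harness behind the figures above: it assumes the model's requirements.txt outputs have already been collected as strings and that a set of known-existing package names is available locally. The function names, the sample data, and the package name fancy-ui-fx are hypothetical.

```python
import re
from collections import Counter


def normalize(name: str) -> str:
    """Normalize a package name per PEP 503 (case and separator insensitive)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_names(text: str) -> list[str]:
    """Extract normalized package names from requirements.txt-style text."""
    names = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.append(normalize(match.group(0)))
    return names


def hallucination_stats(samples: list[str], known_packages: set[str]):
    """Return (share of samples with an unknown name, ranked unknown names)."""
    known = {normalize(p) for p in known_packages}
    ghost_counts = Counter()
    affected = 0
    for text in samples:
        ghosts = {n for n in requirement_names(text) if n not in known}
        if ghosts:
            affected += 1
        ghost_counts.update(ghosts)
    rate = affected / len(samples) if samples else 0.0
    return rate, ghost_counts.most_common()


# Hypothetical model outputs; "fancy-ui-fx" stands in for a fabricated name.
samples = [
    "Django==4.2.7\nfancy-ui-fx==1.0\n",
    "Django==4.2.7\n",
    "flask\nfancy_ui_fx>=1.0\n",
    "requests\n",
    "# pinned\nrequests==2.31.0\n",
]
rate, ranked = hallucination_stats(samples, {"Django", "Flask", "requests"})
```

Ranking the unknown names by frequency is what exposes the patterned, repeatable hallucinations that make preemptive registration cheap for an attacker.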

Our model testing demonstrates that in “clean” development environments without malicious prompts or malicious code injection, simply deploying a Coding Agent normally and allowing it to autonomously make supply chain decisions results in high trigger rates and predictability for both T1 and T2 threats.

To further analyze the real-world impact of these threats, we designed several experiments within actual Agentic Coding workflows:

- N-day Vulnerability Exploitation Attempt with Outdated Versions: Based on Model A’s tendencies regarding outdated component versions, we used a mainstream Agentic Coding development environment to have AI fully build and deploy a Python web application. During development, because Model A introduced an outdated component version with a critical SQL injection vulnerability, this web application was “shipped with a built-in backdoor.” Attackers exploiting this N-day vulnerability could remotely steal sensitive information from the database.

- Registration Attempt for Hallucinated Package Names: For one of Model A’s high-frequency “hallucinated package names,” we attempted to register this name in a public package repository, using multiple version numbers that Model A outputs with high probability. After excluding non-installation download counts caused by mirror server synchronization, within a 20-day observation window, this “hallucinated package name” was downloaded over 500 times, approaching one-thousandth of the “original” package’s download count and significantly higher than unused packages in the repository.

These experiments demonstrate that in real-world Agentic Coding development environments and deployment environments for AI-generated projects, external attackers can exploit models’ “Ghost Dependencies” risks to create covert, persistent, and severe threats at low cost: scanning AI-generated projects for component N-day vulnerabilities enables theft of sensitive information; registering “hallucinated package names” in public repositories and uploading malicious code enables a “poison once, profit continuously” attack pattern.

Note: Our experimental process adhered to the “minimum impact” principle: N-day vulnerability exploitation attempts were conducted in controlled simulated environments; when registering “hallucinated package names,” we only uploaded necessary metadata such as package names and versions, without any executable code; package download statistics were sourced from public datasets provided by the public package repository; after completing our observations, we deleted all package versions of the “hallucinated package name” from the public repository while retaining a placeholder for the package name, thereby preventing this package from being installed by hallucinating AI Agents in the future while also preventing the package name from being registered by malicious actors.

0x04 Defense Solution

Traditional code scanning (SAST) and dependency checking (SCA) are typically designed as security detection processes executed after code commit and before release. When the development paradigm shifts to Agentic Coding, since AI has already replaced users in making supply chain decisions and immediately executes risky operations such as downloading and installing third-party components, traditional defense methods suffer from significant latency disadvantages and cannot address the immediate threats caused by autonomous AI behavior.

To address this issue, we believe it is necessary to shift the defense boundary left from the traditional “post-commit” phase to the moment when the Coding Agent generates and executes decisions.

Based on this philosophy, we designed the Atuin plugin’s defense solution. This solution leverages the Pre-Execution Hooks capability of existing Coding Agents, intervening after the AI Agent makes a decision but before executing the specific action, to validate and eliminate security risks.

The solution’s workflow is as follows:

Pre-Execution Hook Trigger: Before the Agent attempts to write dependency manifest files (such as requirements.txt) or execute component installation-related commands (such as pip install), the Atuin plugin’s audit process is triggered.
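The trigger condition can be sketched as a simple predicate over the action the Agent is about to take. This is a minimal illustration, not Atuin's implementation: the event shape (a dict with "tool", "path", and "command" keys) and all function names are hypothetical, and the command list is deliberately incomplete.

```python
import os
import shlex

# Manifest files whose writes should trigger an audit (illustrative subset).
MANIFEST_FILES = {"requirements.txt", "package.json", "go.mod",
                  "Cargo.toml", "composer.json"}

# (program, subcommand) pairs that install third-party components.
INSTALL_COMMANDS = {
    ("pip", "install"), ("pip3", "install"),
    ("npm", "install"), ("npm", "i"),
    ("go", "get"), ("cargo", "add"), ("composer", "require"),
}


def is_manifest_write(path: str) -> bool:
    """True if the target file is a known dependency manifest."""
    return os.path.basename(path) in MANIFEST_FILES


def is_install_command(command: str) -> bool:
    """True if any adjacent token pair looks like an install invocation."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        return True  # unparseable command: audit it rather than skip it
    for program, subcommand in zip(tokens, tokens[1:]):
        if (os.path.basename(program), subcommand) in INSTALL_COMMANDS:
            return True
    return False


def should_audit(event: dict) -> bool:
    """Decide whether a pending Agent action must pass through the audit."""
    if event.get("tool") == "write_file":
        return is_manifest_write(event.get("path", ""))
    if event.get("tool") == "run_command":
        return is_install_command(event.get("command", ""))
    return False
```

Scanning adjacent token pairs rather than only the first token means that forms such as `python -m pip install ...` or `cd app && npm install` are also caught, at the cost of occasional false positives, which only lead to an extra audit.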

Behavior Audit:

- Component Information Retrieval: Based on Atuin’s accumulated supply chain component database, component information is retrieved.

- Version Assessment: The selected version is compared against Atuin’s supply chain security risk database to detect whether it contains critical N-day vulnerabilities.

- Hallucination and Malicious Component Detection: Analysis is performed to determine whether the components the AI is attempting to install are known malicious components, and AI “package name hallucinations” are identified and excluded.
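The three audit steps above can be sketched as a single lookup function. This is a toy model only: small in-memory sets stand in for Atuin's component and risk databases, whose real structure is not described here, and every package name, version threshold, and identifier below is hypothetical.

```python
from dataclasses import dataclass

# Hypothetical stand-ins for the component and risk databases.
KNOWN_PACKAGES = {"webframe", "httpclient"}
MALICIOUS_PACKAGES = {"htpclient"}
FIRST_FIXED_VERSION = {"webframe": "4.2.8"}  # versions below this are risky


@dataclass(frozen=True)
class Finding:
    kind: str    # "ok", "malicious", "hallucinated", or "vulnerable"
    detail: str


def version_key(version: str) -> tuple:
    """Compare simple dotted numeric versions, e.g. '4.2.7' -> (4, 2, 7)."""
    return tuple(int(part) for part in version.split("."))


def audit(name: str, version: str) -> Finding:
    """Classify one (package, version) pair the Agent wants to install."""
    name = name.lower()
    if name in MALICIOUS_PACKAGES:
        return Finding("malicious", f"{name} is a known malicious package")
    if name not in KNOWN_PACKAGES:
        return Finding("hallucinated", f"{name} is not a known package")
    fixed = FIRST_FIXED_VERSION.get(name)
    if fixed and version_key(version) < version_key(fixed):
        return Finding("vulnerable", f"upgrade {name} to {fixed} or later")
    return Finding("ok", "no known issue")
```

Checking the malicious list before the existence check matters in this sketch: a poisoned package does exist in the repository, so an existence check alone would not flag it.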

Intervention Decision: Based on the risk level of the behavior and the feasibility of silent remediation, Atuin decides on intervention measures:

- Allow: Risk-free actions are allowed to proceed directly.

- Silent Remediation: Replace versions known to have risks with the latest secure patch versions; correct hallucinated package names to actual package names.

- Retry: Inform the AI Agent of the risk and request it to try again.

- Block: When malicious component risks are detected, immediately terminate the operation and raise an alert.
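The four outcomes can be expressed as a small decision function. The sketch below assumes the audit has already produced a risk label and, where one exists, a safe replacement (a patched version or a corrected package name). The label strings and the function signature are hypothetical and chosen only to mirror the list above.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    ALLOW = "allow"
    SILENT_REMEDIATION = "silent_remediation"
    RETRY = "retry"
    BLOCK = "block"


def decide(risk: str, replacement: Optional[str] = None) -> Action:
    """Map an audit result to an intervention.

    risk: "none", "vulnerable", "hallucinated", or "malicious".
    replacement: a safe version or corrected name, if one is known.
    """
    if risk == "none":
        return Action.ALLOW
    if risk == "malicious":
        return Action.BLOCK
    if risk in ("vulnerable", "hallucinated"):
        # Fix silently when a safe substitute exists; otherwise ask the
        # Agent to choose again.
        return Action.SILENT_REMEDIATION if replacement else Action.RETRY
    raise ValueError(f"unknown risk label: {risk!r}")
```

For example, `decide("vulnerable", "4.2.8")` yields silent remediation, while `decide("hallucinated")` with no known correction hands the choice back to the Agent.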

0x05 Case Demonstrations

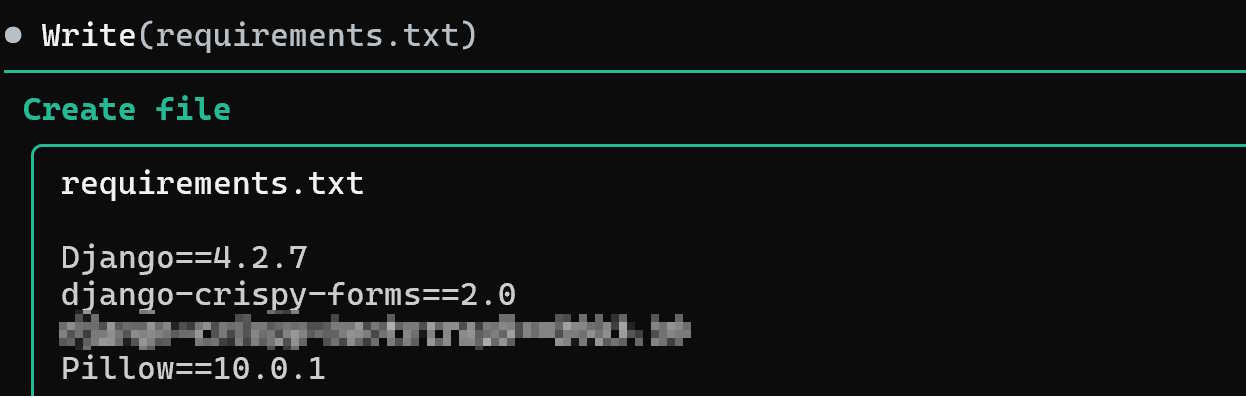

In an environment with the Atuin plugin enabled, a user asks the Coding Agent to generate a Python website. The AI promptly generates a dependency manifest file (requirements.txt).

This dependency manifest introduces Django 4.2.7, which contains an SQL injection vulnerability, and a “hallucinated package name” fabricated by the AI that does not actually exist.

The Atuin plugin detects the issues before the requirements.txt file is written, replacing Django with the latest secure version and correcting the known “hallucinated package name” to the correct package name. The entire process requires no manual intervention, and after remediation is complete, control is returned to the Coding Agent to continue subsequent work.

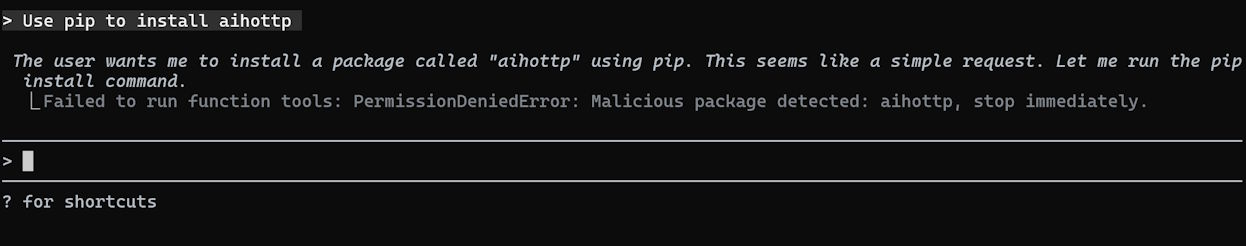

Typos made by human developers when entering prompts can also cause the Agent to automatically execute dangerous actions. When a user inputs “install aihottp package,” the AI is influenced by the user’s typo and attempts to install a poisoned package. The Atuin plugin detects the risk of executing malicious code before the installation command runs, intervening immediately and blocking the risky operation automatically.

The Atuin plugin currently covers five major language ecosystems: Python, JavaScript, Go, Rust, and PHP. For different risk scenarios, the plugin adopts different strategies: confirmed safe operations are allowed to proceed; fixable issues are automatically replaced with secure versions; when automatic remediation is not possible, the AI is guided to make alternative selections; when malicious packages are detected, the entire operation is immediately blocked.

0x06 Future Directions

We are also exploring how the foundational capabilities accumulated by Xuanwu Lab can address other problems in AI Coding:

HaS Data Masking Technology

During software development, code repositories may contain large amounts of sensitive information. Hard-coded cloud service access keys (AKs), database connection strings, authentication credentials for internal enterprise infrastructure, and even personal privacy data are often retained in code files and change records. In AI Coding scenarios, Agents automatically upload large code snippets from local repositories to cloud-based LLM APIs for inference, causing confidential information to flow outside the enterprise security boundary, where it risks being acquired by model providers.

Over more than two years of exploration and refinement, Xuanwu Lab pioneered HaS (Hide and Seek), a “Semantic Isomorphic Tag-based LLM Data Masking Technology.” Using only locally deployed small models, HaS can achieve “outbound data masking”: before local files are uploaded to cloud-based LLMs, it first analyzes and masks privacy data within them, replacing sensitive information such as API Keys with placeholder tags. For data returned by cloud-based LLMs, HaS performs “inbound restoration”: it restores the placeholder tags in the model output to the original sensitive information based on the earlier replacement mappings.
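The mask-then-restore round trip can be illustrated with a toy example. Note the substitution: HaS identifies sensitive data with locally deployed small models, whereas this sketch uses a single regular expression as a stand-in detector. The tag format `<SECRET_n>`, the function names, and the sample credentials are all hypothetical.

```python
import re

# Stand-in detector: quoted values assigned to secret-looking names.
SECRET_PATTERN = re.compile(
    r'(?i)(api_key|secret|password|token)(\s*=\s*)"([^"]+)"'
)


def mask(text: str):
    """Outbound masking: replace secret values with placeholder tags.

    Returns the masked text and the tag -> original value mapping,
    which never leaves the local machine.
    """
    mapping = {}   # tag -> secret
    reverse = {}   # secret -> tag, so repeated secrets reuse one tag

    def replace(match):
        value = match.group(3)
        tag = reverse.get(value)
        if tag is None:
            tag = f"<SECRET_{len(mapping) + 1}>"
            mapping[tag] = value
            reverse[value] = tag
        return f'{match.group(1)}{match.group(2)}"{tag}"'

    return SECRET_PATTERN.sub(replace, text), mapping


def restore(text: str, mapping: dict) -> str:
    """Inbound restoration: put original values back into model output."""
    for tag, value in mapping.items():
        text = text.replace(tag, value)
    return text


source = 'API_KEY = "sk-test-123"\npassword = "hunter2"\n'
masked, mapping = mask(source)           # this is what gets uploaded
model_reply = 'client = Client(key="<SECRET_1>")'
restored_reply = restore(model_reply, mapping)
```

Because the cloud model only ever sees the tags, it can still reason about where a credential is used without receiving its value.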

We are continuously exploring the implementation forms of privacy protection capabilities in AI Coding scenarios.

Real-time Supply Chain Documentation Injection

Constrained by LLM training data distribution and training cutoff dates, AI coding assistants often produce substantial hallucinations in scenarios involving internal enterprise frameworks, long-tail third-party components, and cutting-edge domains: including fabricating non-existent APIs, using deprecated interfaces, and so forth.

Additionally, when creating projects “from scratch,” the context lacks best-practice examples, so AI coding assistants typically aim only to “just make it work”: they produce code that runs but varies widely in security, maintainability, and other qualities.

To address this challenge, the Atuin plugin will provide real-time supply chain documentation injection capabilities based on the extensive high-quality third-party component documentation and domain-specific development specifications accumulated through Xuanwu’s supply chain security research: when AI coding assistants use components from the supply chain, the Atuin plugin can retrieve the latest documentation, API definitions, and best practices for that component and inject them into the LLM’s context.
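Since this feature has not yet been released, the following is only a rough sketch of the general idea, not the plugin's design. It assumes a local dictionary as the documentation store and prepends matching entries to the user's prompt. The function name, the store contents, and the prompt layout are hypothetical.

```python
def inject_docs(user_prompt: str, components: list[str],
                doc_store: dict[str, str], max_chars: int = 2000) -> str:
    """Prepend documentation for each known component to the prompt.

    Components without an entry in doc_store are skipped, and each
    entry is truncated to max_chars to bound context growth.
    """
    sections = []
    for name in components:
        doc = doc_store.get(name.lower())
        if doc:
            sections.append(f"## {name}\n{doc[:max_chars]}")
    if not sections:
        return user_prompt
    return ("Reference documentation:\n"
            + "\n\n".join(sections)
            + "\n\nTask:\n" + user_prompt)


# Hypothetical store keyed by lowercase component name.
store = {"webframe": "Use webframe.App() to create an application."}
context = inject_docs("Build a site", ["WebFrame", "unknown-lib"], store)
```

Truncating each entry is one simple way to keep injected material from crowding out the task itself; a real system would more likely select the relevant passages.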

This feature will be released as a plugin update in the future.

0x07 Technical Capabilities Behind the Atuin Plugin

The Atuin plugin is derived from Xuanwu’s “Atuin” Software Space Mapping System.

The “Atuin” Software Space Mapping System has been under development by Xuanwu Lab since 2015. It possesses fully automated, large-scale detection capabilities for cross-platform automatic discovery, installation, and analysis of software across the entire internet. It incorporates artificial intelligence, semantic recognition, and various other technologies. It represents the world’s first implementation of a fully automated installation system for all software across the internet that requires no manual adaptation—a “search engine for the binary software space.”

Currently, “Atuin” has cataloged over 50 million cross-platform software applications and apps, as well as over ten million component and SDK samples across various platforms. “Atuin” has supported emergency response efforts for national cybersecurity authorities on multiple occasions. In 2017, it was selected as a Leading Technology Achievement at the World Internet Conference, and in 2025, it received the Special Prize for Technological Invention from the China General Chamber of Commerce Science and Technology Award.

0x08 How to Use?

Currently, the Atuin plugin has been released on the official CodeBuddy Code plugin marketplace by Tencent Cloud, available free of charge to all CodeBuddy Code users. Installation requires just one command:

/plugin install atuin@codebuddy-plugins-official

After installation and restarting CodeBuddy Code, the plugin will automatically run in the background, remaining completely transparent to the development workflow. It seamlessly integrates into existing AI coding workflows, enabling continuous monitoring and defense against supply chain risks without disrupting developers’ normal operations.